Recently, a poster on Reddit asked how to get into offensive security as a student studying Computer Science. Before the post was removed, the poster expressed an interest in penetration testing or reverse engineering.

I studied Computer Science at different schools (BSc/MSc/Whateverz). The question is timely: a new semester is about to begin, and students still have an opportunity to change their schedules if needed.

Offensive security is multi-disciplinary and people come into it with different backgrounds. Any background you master will equip you to become a useful contributor. Studying Computer Science (or even having a degree in the first place) is not the only path into this niche of security.

If you want to milk your Computer Science education for offensive security skills, here are my tips.

In general

You should learn to program in a systems language, a managed language, and a scripting language. Learn at least one computer architecture really well too.

Programming Languages

Many schools will give you the opportunity to learn Java or C#. This will check the managed language box. I’ve used Java to develop graphical user interfaces and to write middleware for distributed systems. You may find Java and C# aren’t interesting; that’s fine.

For the systems language side, take a course that will teach you C. I prefer C over C++. Working in C will force you to cast blobs of memory into different structures and to use function pointers. C will help you develop a mental model of how data and code are organized in memory.

Python and Ruby are the preferred scripting languages in the security community. I lean towards emphasizing Python over Ruby. There are a lot of great libraries and books [1, 2] on doing security stuff with Python.

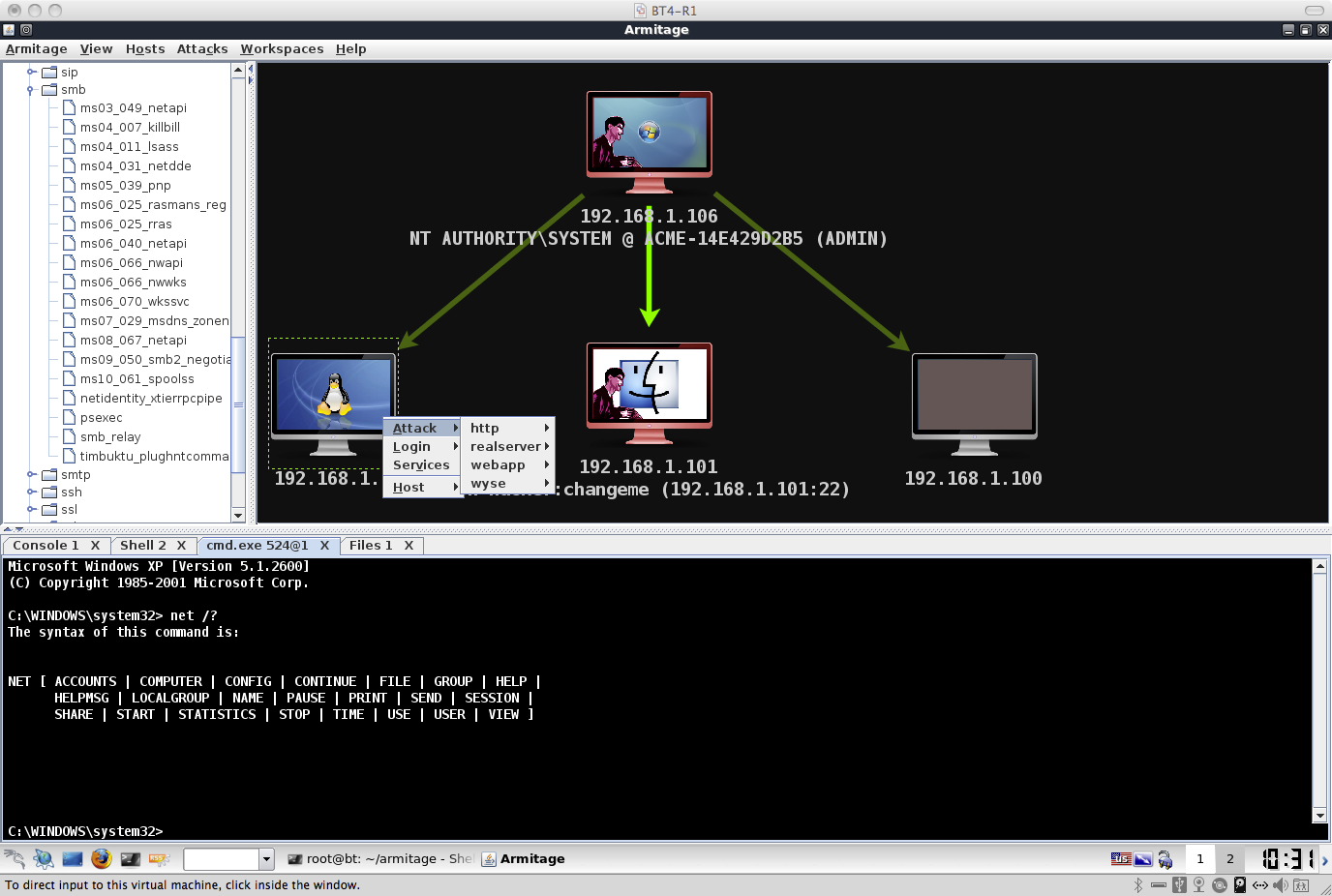

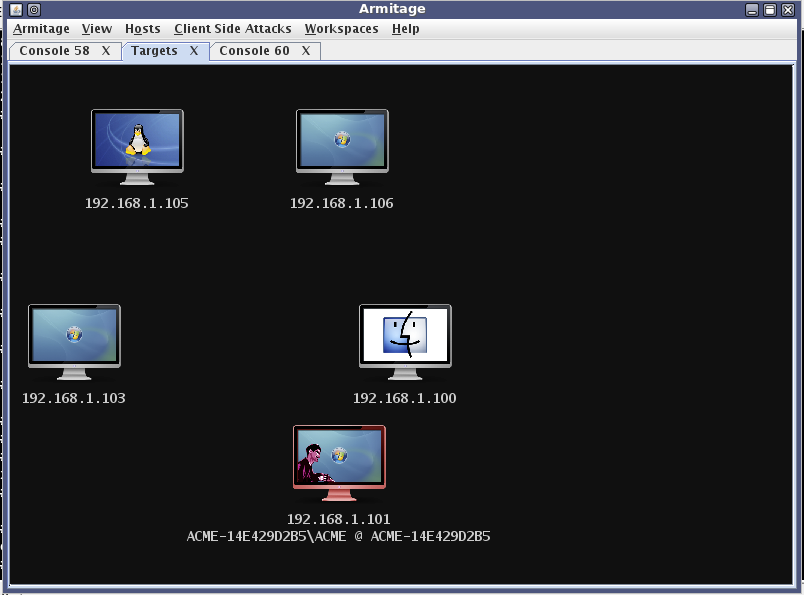

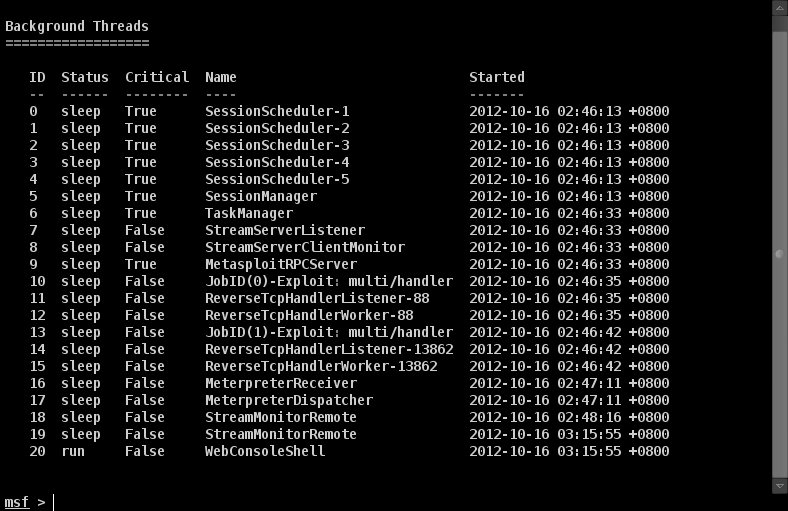

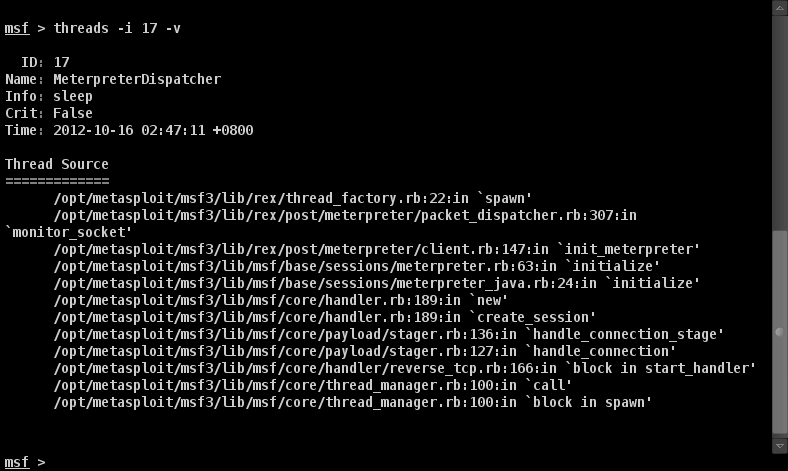

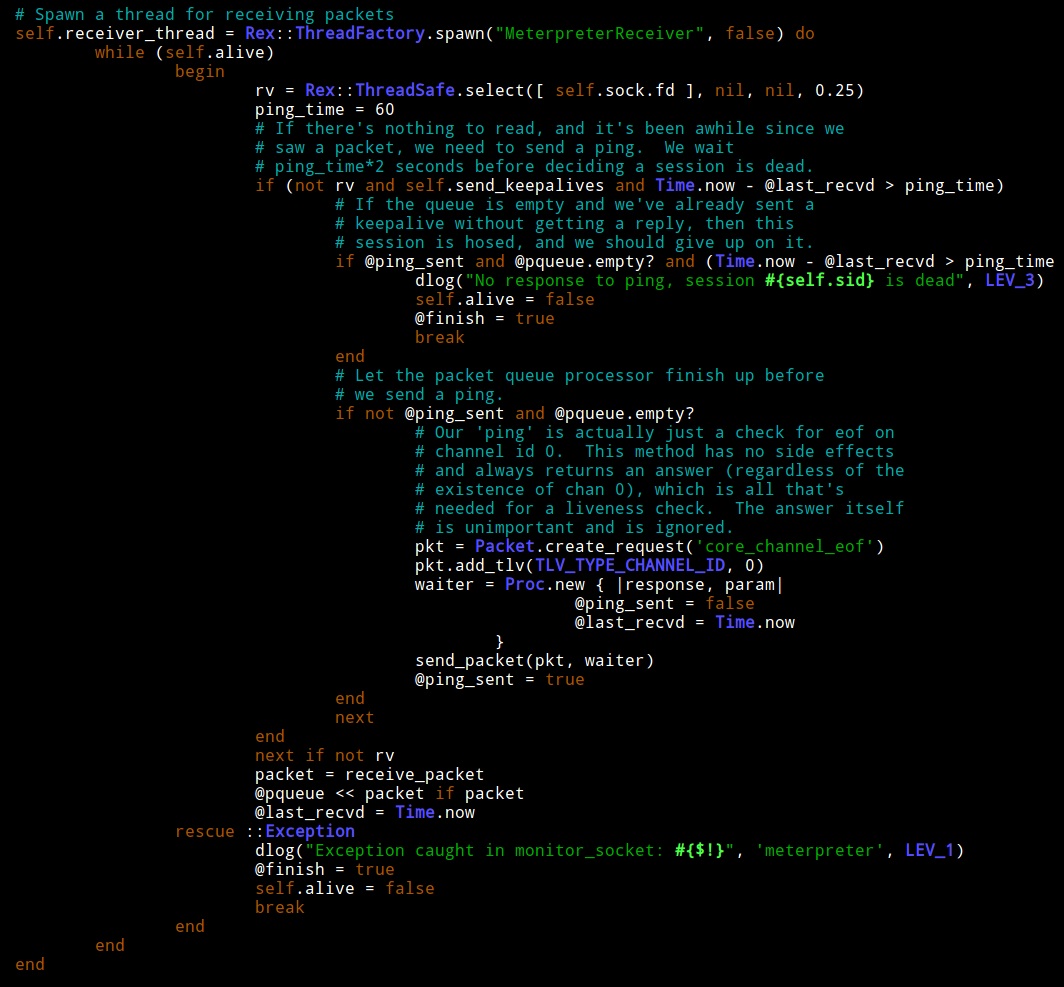

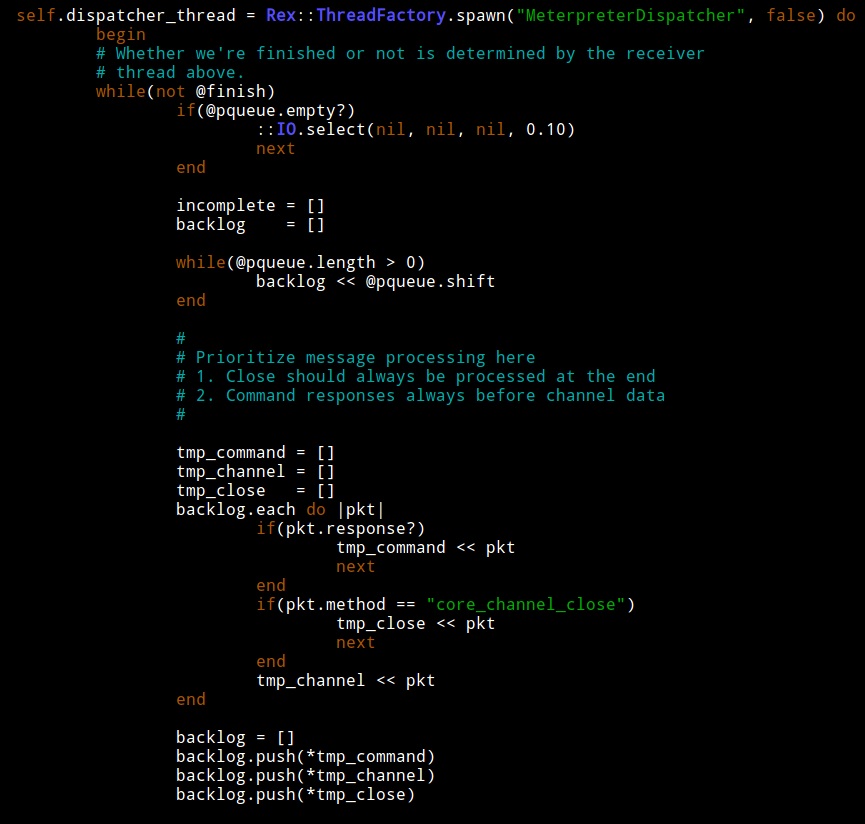

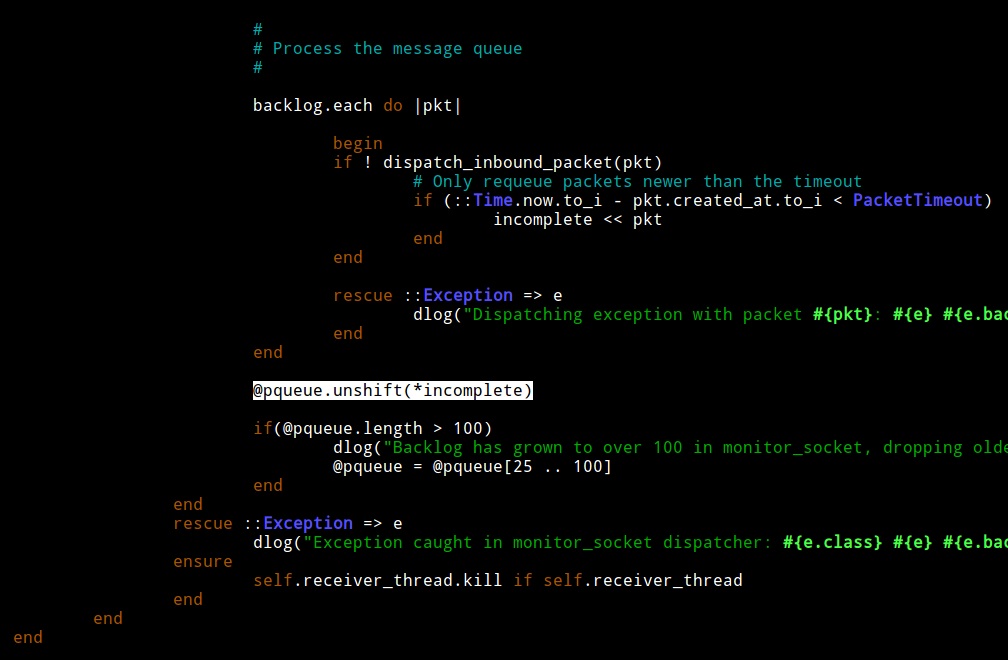

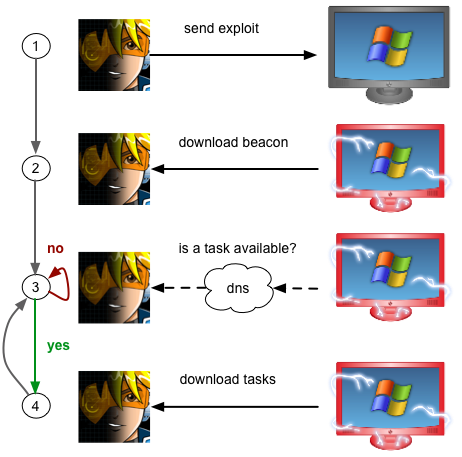

If you want to tinker with the Metasploit Framework, your best bet is Ruby. Ultimately, pick a project and use that as an excuse to master a language or tool. This is how you will acquire any skill you want (during and after college).

Operating Systems

Take an operating systems course and the advanced OS course if you can. Usually these courses require you to work in a kernel and do a lot of C programming. Knowing how to work in a kernel will make you a better programmer and teach you to manipulate a system at the lowest levels if you need to.

After a good first course in operating systems, you will know how to program user-level programs, understand which services the OS provides you, and ideally you will have modified or extended a kernel in a simple way.

Take an architecture course and follow it up with a compiler construction course. By the time you get through architecture and compiler construction, you will know assembly language for a specific architecture and how to use a debugger really well.

One note on the above: some CS departments offer watered-down versions of these courses. They may force you to work in Nachos instead of a UNIX kernel. If this is the case, see if your school’s EE department offers an equivalent course that teaches skills tied to real systems.

Theory is Cool Too

Again, this is a very systems-centric slant on CS. The theoretical side has a lot of opportunity too. Some universities have courses on formal methods for software engineering, model checking, and the like. There’s some great work happening in this area. Read Ross Anderson’s Security Engineering book to see if anything stands out and try to map it to a course.

To appreciate how broad security research is, read the list of DARPA’s Cyber Fast Track awards or go through the papers published at the USENIX Workshop on Offensive Technologies. You’ll see both the systems side of CS and the theoretical side making appearances in both of these places.

Don’t Expect This…

Active Directory administration, configuring Cisco routers and firewalls, using hacking tools, and other practical system administration skills are not usually covered in a CS curriculum. Be ready for this. If this is what you want, there are some good programs on Systems Administration and you may want to consider a switch.

Also, it’s not common for computer science departments to teach courses in web application development. If you want to learn a web application stack, you’ll need to take courses in another department or learn this on your own.

Independent Study

If you get through the foundational material and find yourself hungry for more, try to arrange an independent study. I like independent study. It’s a chance for you to work on your own and produce something to prove you’ve acquired a skill or mastered a process. If your independent study produces open source or a useful paper, you may find the independent study boosts your career more than an academic transcript ever will.

Let’s say that you’re stuck and do not have a project idea for an independent study. That’s fine. Take a look at courses offered by other universities. See if there’s a way to tailor the course content and projects into a study plan that a professor at your university may supervise.

Since you’re interested in offensive security, here are my two suggestions:

NYU Poly offers an Application Security and Vulnerability Analysis course. All of the lectures, homework, and project materials are available on the website. If you want to learn how to find vulnerabilities and write exploits, you could work through this course at an accelerated pace and spend the rest of the semester on a final project.

Syracuse University publishes the Instructional Laboratories for Security Education (SEED). This collection contains guided labs to explore software, web application, and network protocol vulnerabilities.

SEED also has open-ended implementation labs to add security features to the Minix and Linux kernels. If you ever wanted to write a VPN, develop your own firewall, or try a new security concept, these labs are a great start and any one of them could seed an independent study project. These labs were designed to provide a challenging end-of-course project. Two of these would make a very interesting semester of independent study.

How to Get Experience

If you have an idea about what you want to do while in college, then use internships, open source projects, and extracurricular activities to build up a portfolio of skills relevant to your dream job. These activities will either make you stand out to get your dream position or help you decide that the dream position isn’t so exciting.

To get involved with open source, pick a project and start doing something with it. If this is too open-ended, take a look at the Google Summer of Code Project List and see if there’s anything there that strikes your fancy.

Another opportunity is the National Science Foundation’s Research Experiences for Undergraduates program. This program provides an opportunity to participate in a research project at another university over the summer.

If you’re an Air Force ROTC cadet, you should spend a summer with the Advanced Course in Engineering Cyber Security Bootcamp. This 10-week course will teach you how to write and tackle difficult problems with a computer and network security focus.

If you think you want to do services work, I recommend finding an internship with a security services company. Exposing yourself to multiple opportunities will help you decide the best place for you.

The Big Picture

A Computer Science degree generally prepares you for research. It’s not job training for developers, QA people, software engineers, etc. What you will get out of CS is a foundation. You will come to view systems as complex layers glued together by abstractions. Security problems find their way into systems when a developer fails to understand the details in a lower layer. The Computer Science foundation will help you become a person who can seamlessly think in multiple levels of abstraction and manage a lot of details at one time. This ability is necessary if you want to break or secure systems.