Saturday, 6:30pm ended my 2013 red teaming season. I’ve participated in the Collegiate Cyber Defense Competition as a red team volunteer since 2008. I love these events primarily because of the opportunity I get to interact with the student teams and learn from my peers in this field. But, since 2011, I’ve also traveled to these events with an agenda of exercising my tools, testing improvements, and getting new ideas.

2013 was the first year I had an opportunity to exercise Cobalt Strike and its capabilities at these events. CCDC exercises don’t offer a client-side attack surface, which takes some Cobalt Strike features out of play. However, its collaboration capabilities, Cortana scripting, Beacon agent, and the ability to manage multiple team servers are all very relevant to a CCDC red team.

I wrote about my experiences at the Western Regional Collegiate Cyber Defense Competition. Now I’d like to share what happened on the National CCDC Red Team.

I showed up to San Antonio, TX exhausted. I spent last week participating in two exercises: the Mid Atlantic CCDC event and another grueling (but very challenging and fun) exercise. Once I got to San Antonio, I had dinner with my fellow red team members and I crashed out. I made it to the red team room at about 9:15am, approximately 45 minutes before go time.

This was my second year on the National CCDC Red Team. The National CCDC Red Team operates differently from the regionals. Where regionals are generally a free-for-all, the National team assigns two red team members to each blue team. We’re allowed to perform actions against other teams, but we must focus on our assigned team first, and we must not disrupt or step on the red team members who own that particular blue team.

When I described this model to my girlfriend, she immediately objected: “That’s not fair! What happens if one team gets less skilled people assigned to them?” Hear me out, this model can work, and during the 2013 National CCDC, we provided the fairest and most balanced red experience I’ve seen at a CCDC event yet.

Preparation

I spent the 45 minutes before the event getting my initial attack kit prepped. One role I usually fill at CCDC events is the role of initial exploitation and persistence. The Red Team was assigned several IP address ranges. Our team captain, David Cowen, parceled them out by assigning each red team member a range of addresses they could bind in the last octet of all the ranges.

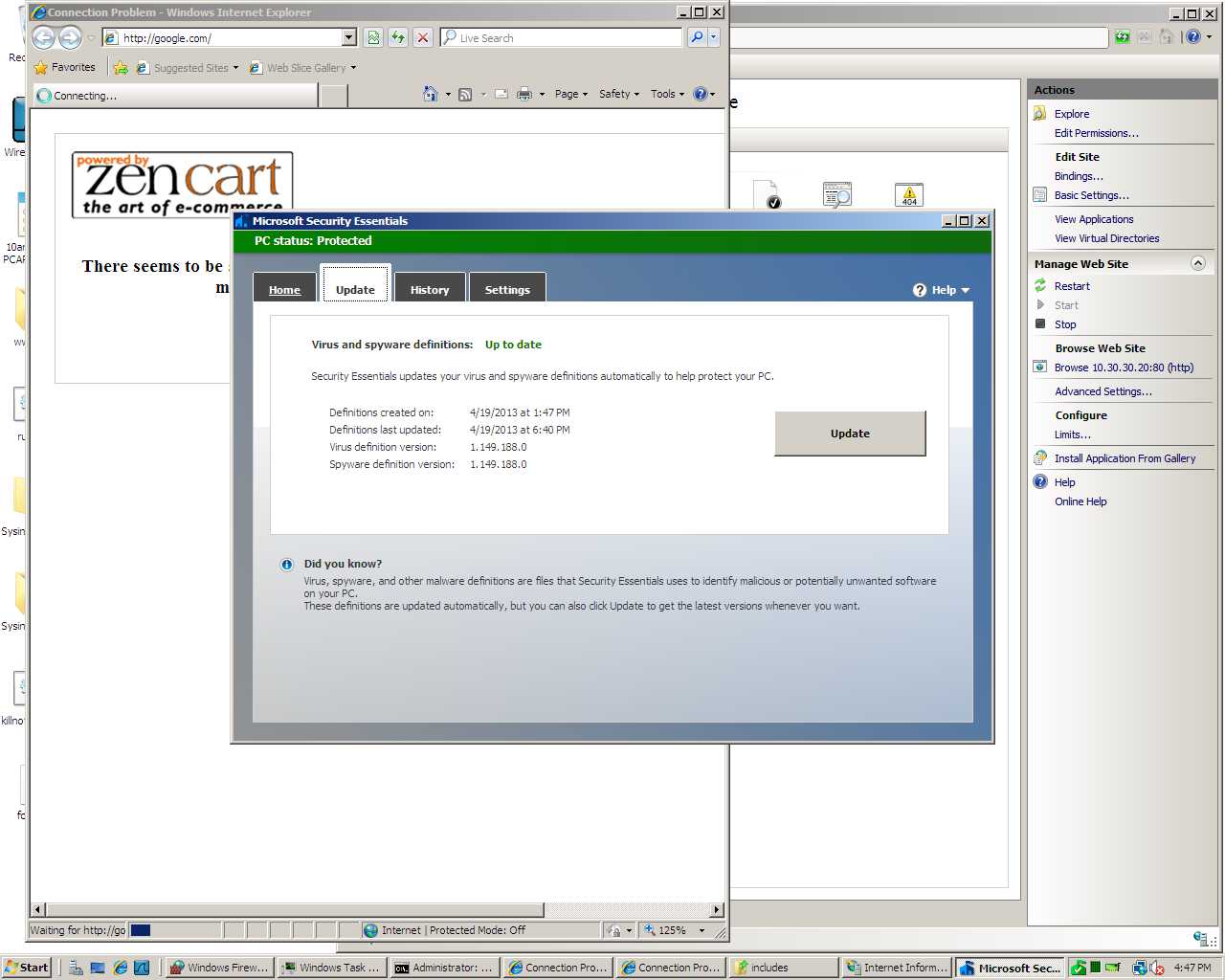

Once I knew my addresses, I loaded a Cortana script that allows me to generate my persistence artifacts with the appropriate addresses. At CCDC, student teams are allowed to install anti-virus. Unfortunately, most artifacts generated by the Metasploit Framework are caught by anti-virus. I didn’t want to make it that easy to clean us out. So, I opted to write a persistent stager for the CCDC events this year. This stager ships with several addresses embedded in it. Once it is run, it will attempt to connect to each of these addresses, one per minute, until it successfully downloads the second stage of my malware and injects it into memory. Because this code is not in use elsewhere, no anti-virus product that I’d have to worry about at CCDC catches it.

Pro-tip: if you found any of my persistence mechanisms and ran strings against them, you would have known my staging addresses and could have blocked them. If you blocked them, you would have blocked my other backdoors that attempted to stage through the same addresses.
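For blue teams who want to try that tip, here is a minimal Python sketch of the idea: pull printable ASCII runs out of a suspicious file, much like the strings utility does, and list anything that looks like an IPv4 address. The function names and the four-character minimum are assumptions made for illustration, and the approach only works when addresses are stored as plain ASCII text. A stager that packs or encodes its addresses would not show up this way.

```python
import re

# Matches dotted-quad candidates that are not part of a longer number or version string.
IPV4 = re.compile(r"(?<![\d.])(?:\d{1,3}\.){3}\d{1,3}(?![\d.])")


def printable_strings(data: bytes, min_len: int = 4) -> list:
    """Return runs of printable ASCII at least min_len long (similar in spirit to `strings`)."""
    pattern = re.compile(rb"[\x20-\x7e]{%d,}" % min_len)
    return [m.decode("ascii") for m in pattern.findall(data)]


def candidate_ipv4(data: bytes) -> list:
    """Return unique IPv4-looking strings, in order of first appearance."""
    found = []
    for text in printable_strings(data):
        for ip in IPV4.findall(text):
            octets_ok = all(0 <= int(part) <= 255 for part in ip.split("."))
            if octets_ok and ip not in found:
                found.append(ip)
    return found


if __name__ == "__main__":
    import sys

    # Usage: python find_ips.py suspicious.exe
    with open(sys.argv[1], "rb") as handle:
        for address in candidate_ipv4(handle.read()):
            print(address)
```

Any address this turns up is only a candidate. Confirm it against your own network's legitimate traffic before blocking it.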

Anyway, I generated my artifacts before I even had time to bind all of my IP addresses. I set up a local Cobalt Strike instance for the initial attack and I was getting ready to set up a team server when very suddenly, 10am came and Dave shouted “go! go! go!”.

Opening Salvo

The first minutes of any CCDC event are critical. As a red cell member, I do not see CCDC as a game of patching, installing firewalls, and thwarting an attacker who is attempting to scan and exploit you. I see CCDC as an intrusion detection and response game. I want the students to work under the assumption that an attacker is present, focus on their operational security, and develop creative ways to dig us out, spot our activity, or disrupt our command and control. Truth is, once they patch and set up a firewall–if we don’t have access, we’re likely not going to get it. Intrusions today start with the end user for a reason–these other layers of defense stop the easy stuff.

Contrary to popular belief, I no longer script my opening attack. I’ve moved away from it this year. I found at earlier events that my scripted exploitation would sometimes make assumptions that I would need to correct once I understood reality. The Armitage and Cobalt Strike user interfaces are efficient enough to allow me to think on my feet and simultaneously apply an action against all systems–very quickly.

I start most CCDC events with a db_nmap sweep. I don’t care about discovering each open service. I want the low hanging fruit only. I use nmap -sV -O -T4 --min-hostgroup 96 -p 22,445 across all student ranges to discover the easy exploitation opportunities as quickly as possible.

At National CCDC, student teams have two networks: a local network and a cloud network. This year, I opted to go after their local networks first and follow up against their cloud networks second.

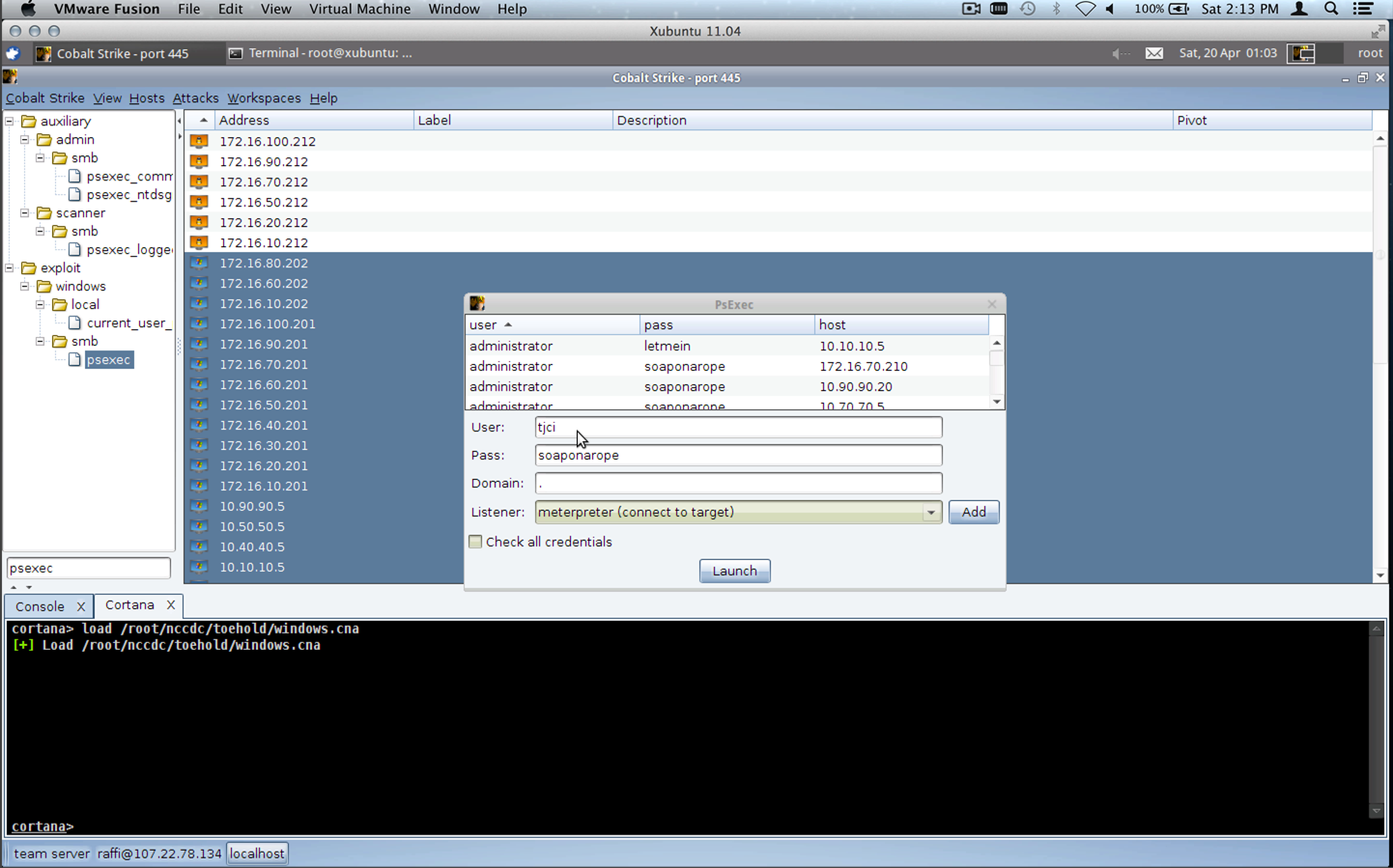

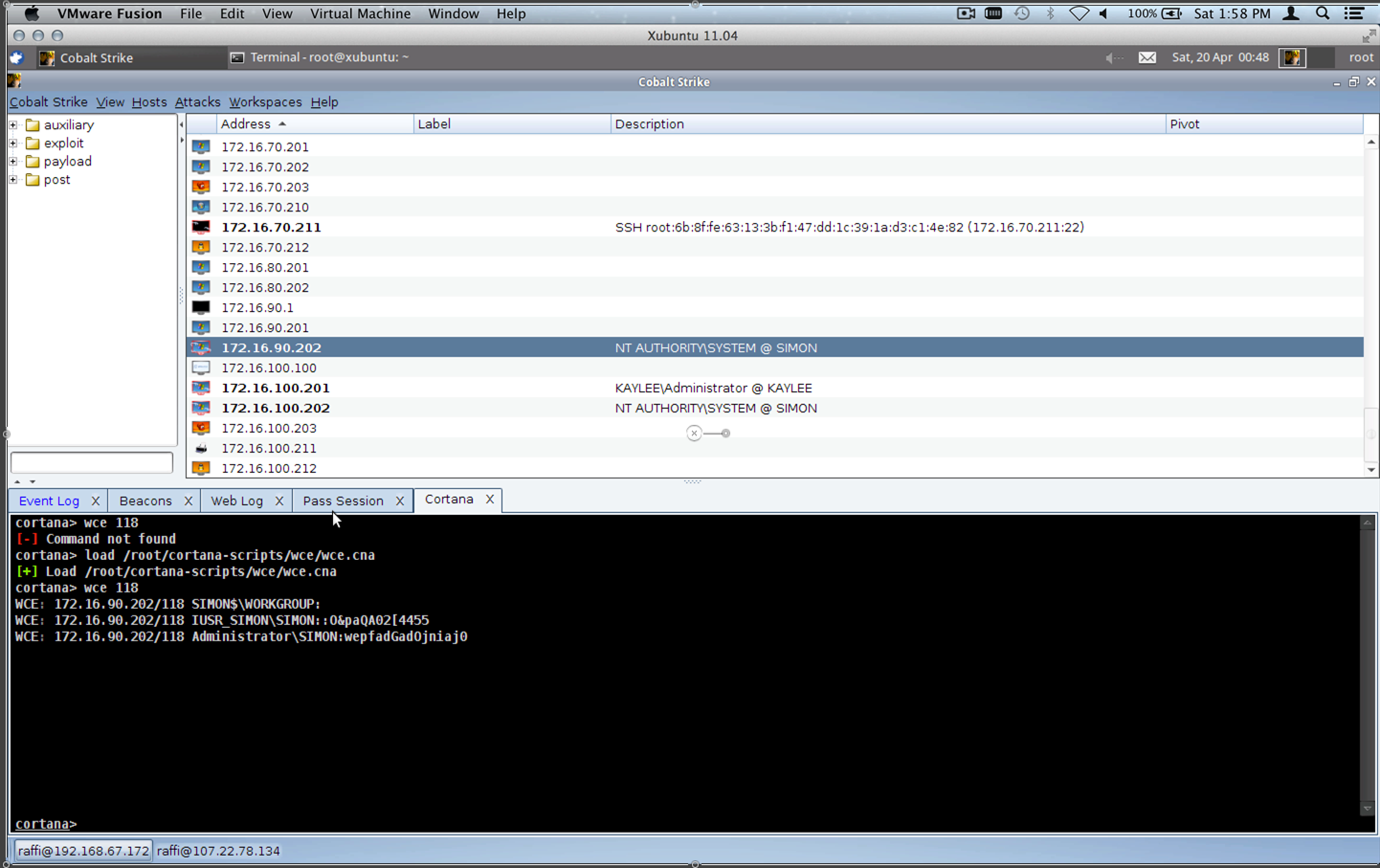

Once a scan comes back, I sort my host display by the operating system icon. I simply highlight all Windows systems and launch the ms08_067_netapi module against them. This year, due to a bar on Mubix’s worm, we were given a list of potential default passwords–for the first time in National CCDC history. I used this information to execute psexec against all of the remaining Windows hosts. If I did not have the default credentials, I would use a Cortana script to run Windows Credential Editor to get them.

As Windows sessions came in, I had a Cortana script loaded that would automatically install my beachhead executable onto the systems. The persistence mechanics were nothing new. They were very similar to last year’s Dirty Red Team Tricks talk. The beachhead executable’s only purpose was to connect to me, download Beacon, and inject it into memory.

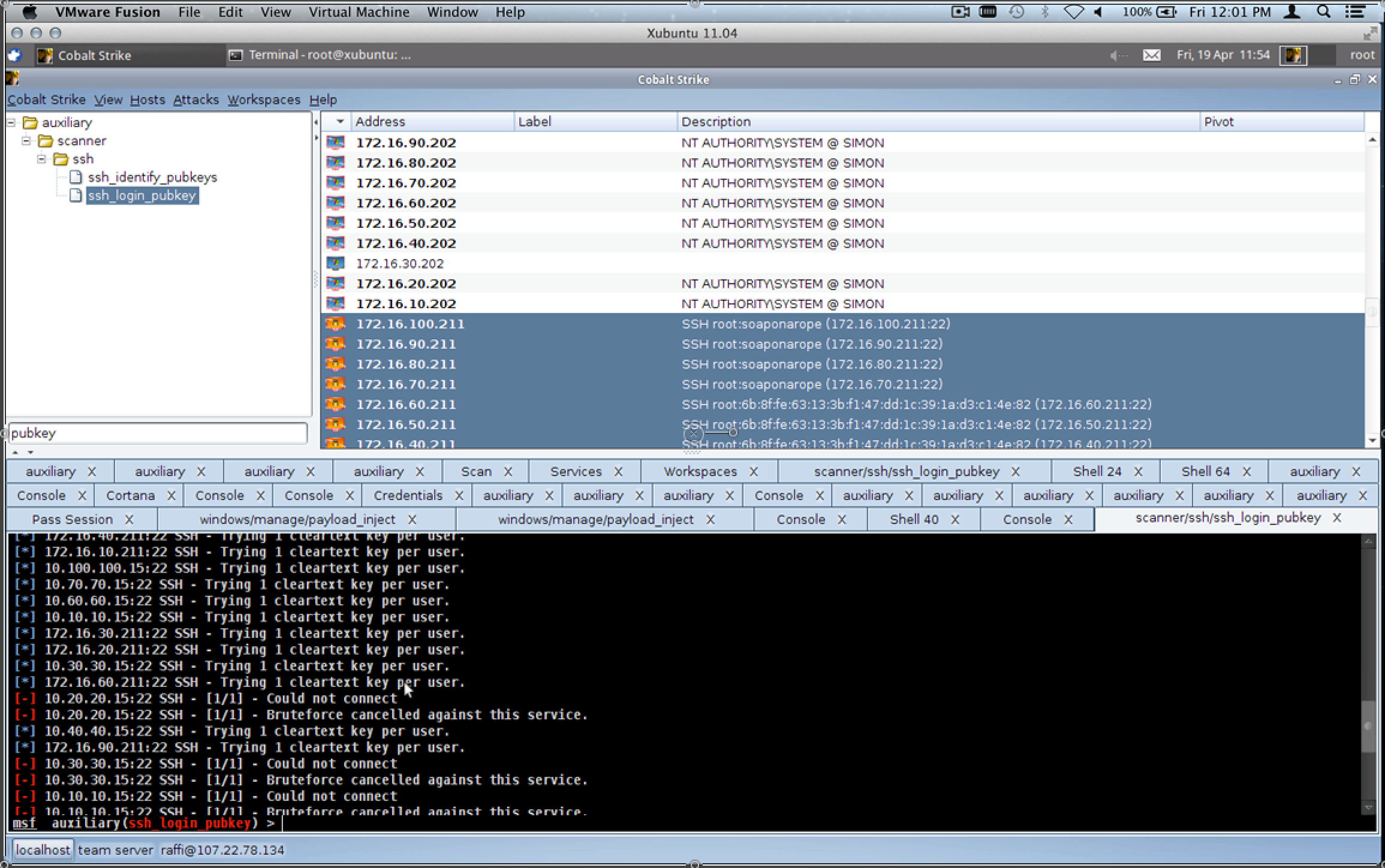

Once I had the Windows systems, I ran the Metasploit Framework’s ssh_login module against all of the UNIX systems with root and each of the suspect default credentials. Armitage and Cobalt Strike tip–hold Shift as you click Launch to run a module but keep the dialog open. This makes it really easy to try multiple variations of an attack very quickly.

Once again, I had a Cortana script loaded to automatically install some persistence on the UNIX systems. I didn’t do much to the UNIX systems at National CCDC because I did not want to step on my other red team members. I simply dropped an SSH key for root and altered the SSH configuration to allow the one key to work for any user on the system.

Team Server

After the opening salvo, I had successfully exploited the Windows systems with port 445 open in the competition environment and I had root access to the UNIX systems with SSH open (except for the Solaris systems assigned to each team). This whole process took 1 to 2 minutes total. In theory, I had backdoors on each of these systems too, but I had no way to know because I had not yet set up a team server.

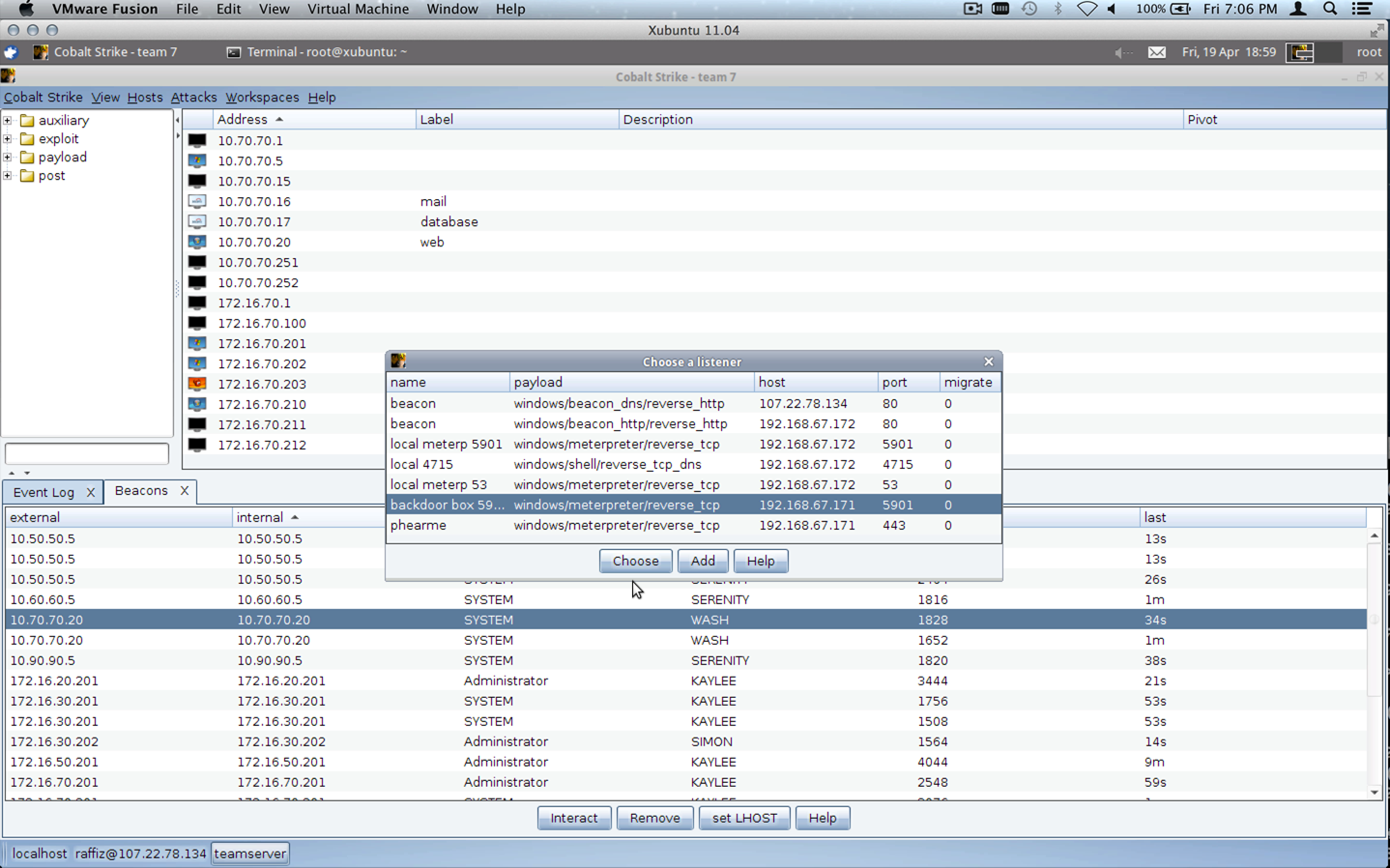

I went to work to set up a Cobalt Strike team server. Of the four staging addresses I created, I only bound one of them. Once Cobalt Strike was up, I connected my client to this team server, set up the Beacon listener, and gave it a different list of IP addresses to beacon back to.

Beacon is a Cobalt Strike-specific payload. It doesn’t require a persistent connection to the target; rather, it phones home every so often to request tasks to execute. I created Beacon to act as a quiet (in memory) persistence agent. The idea is you can use it to spawn a new Meterpreter session when it’s needed. In a pinch, Beacon can also act as a remote administration tool if your Meterpreter traffic is squashed by network defenses.
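From the defender's side, an agent that phones home on a schedule leaves a pattern: connections to one destination at fairly regular intervals. The Python sketch below is a hypothetical illustration of that idea, not a description of how Beacon behaves on the wire or how any product detects it. It takes connection timestamps (in seconds) for a single source and destination pair and flags them when the gaps between connections vary very little. The function name, the five-event minimum, and the 10% jitter threshold are all assumptions.

```python
from statistics import mean, pstdev


def looks_periodic(timestamps, min_events=5, max_jitter=0.1):
    """Return True when the gaps between events are nearly constant.

    timestamps: connection times in seconds for one source/destination pair.
    max_jitter: allowed ratio of gap standard deviation to mean gap (assumed value).
    """
    if len(timestamps) < min_events:
        return False  # too little data to say anything
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    average_gap = mean(gaps)
    if average_gap <= 0:
        return False  # all events share one timestamp
    return pstdev(gaps) / average_gap <= max_jitter


if __name__ == "__main__":
    steady = [0, 60, 120, 181, 240, 300]   # roughly one connection per minute
    bursty = [0, 5, 90, 95, 400, 410]      # ordinary, irregular traffic
    print(looks_periodic(steady), looks_periodic(bursty))
```

A check like this is easy to defeat with randomized sleep times, so treat it as one hint among many, not as a verdict.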

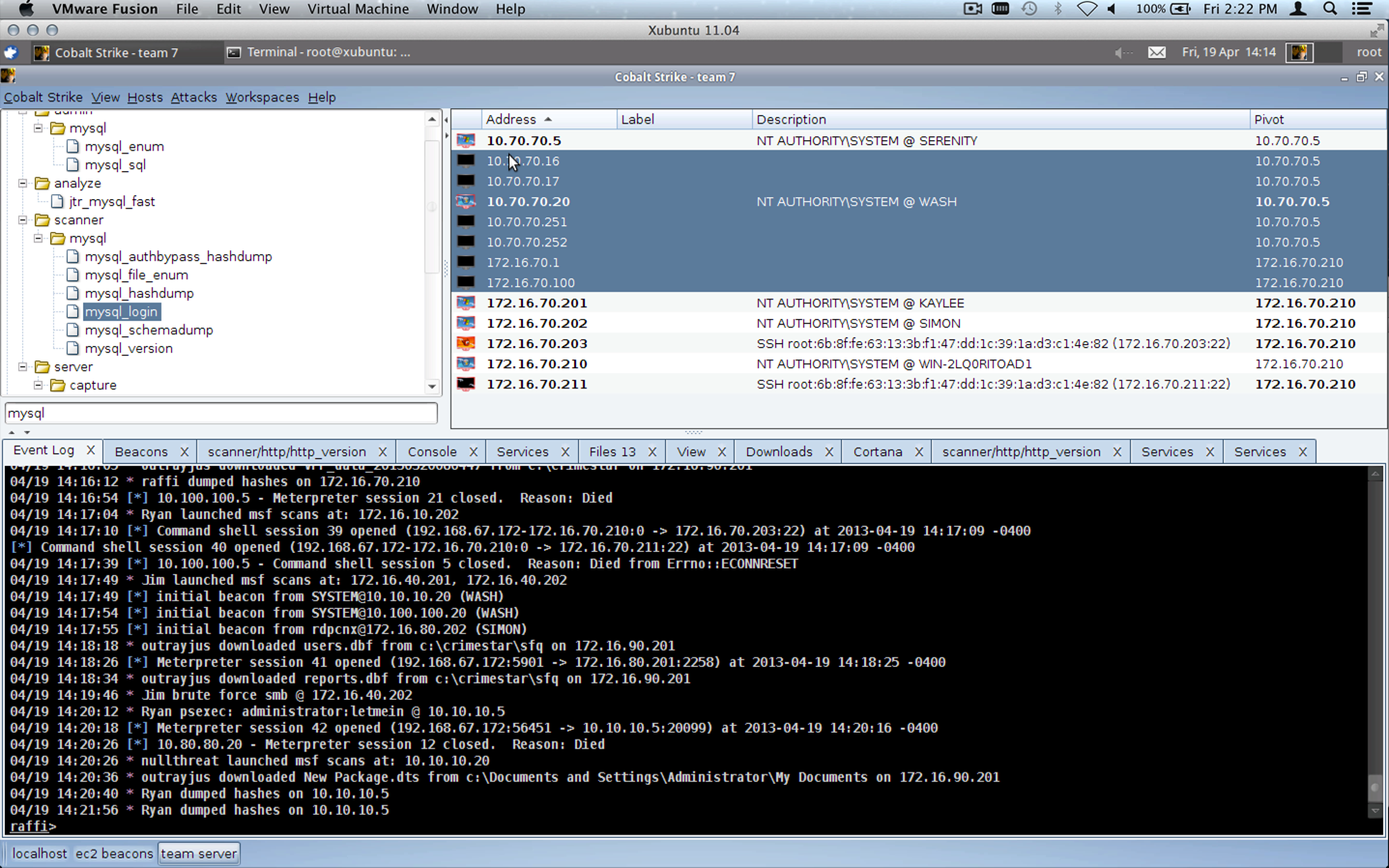

Once the listener was up, I noticed my Beacons were coming back and I was able to verify that we had all Windows systems in the competition environment at that time. This really allowed us to give students a fair game. Each team was owned, from the beginning, with the same backdoors.

Cobalt Strike Use

I then spent time getting the folks who asked for it set up with Cobalt Strike so they could task their own Beacons. Several tools were in play on the National CCDC Red Team. I saw msfgui, msfconsole, Core Impact, Dark Comet, and Cobalt Strike. There was some Armitage too early on, but I showed those folks how they could connect Cobalt Strike to multiple Metasploit Framework instances at once and that did away with that.

8 out of 10 blue teams had at least one red team member using Cobalt Strike to conduct post-exploitation and gain more access into their network. By my count, 15 out of 20 red cell members were using Cobalt Strike. 12 of the 20 red team members used only Cobalt Strike–primarily through the local team server without any other penetration testing platform in use. In effect, 8 simultaneous engagements were happening through one team server. Wow!

The workspaces feature helped a lot with this. Each Cobalt Strike user was able to define a workspace that showed them only the hosts, services, and sessions for their team.

As a developer, nothing excites me more than seeing someone use a tool I wrote. I’m very honored that so many well respected professionals in this field gave Cobalt Strike’s toolset a try during the National CCDC event.

Other Tools

Some custom stuff was in use during National CCDC. We had a custom Linux backdoor, something that works a lot like Beacon, deployed to student systems. We also used Dark Comet to further fortify our access to student systems once the initial salvo was complete. Individually, a few red team members chose to deploy different RATs against their specific team, but I’m not aware of anything else that was done on an all-teams basis.

We were also using a data management system developed by Alex Levinson, Maus, and Vyrus to keep track of shared information and automatically track red activity, based on a Metasploit Framework instrumentation plugin. My favorite part of the whole system–it integrates etherpad and I’m in love with etherpad for red team information sharing. It’s much better than a wiki.

Tempo

Once we were in, post-exploitation was up to each individual cell. Knowing that we had equal access and persistence across all teams, I greatly enjoyed the opportunity to focus on one team. The first day, our job as the red team was to stay in and quietly steal data. We were under strict instructions to not do anything that might reveal our presence. I spent the first day setting up keystroke loggers, downloading interesting files, taking screenshots, and occasionally sweeping the network to try to get access to other hosts that the initial salvo didn’t give us.

At the start of day 2, we still had access to Windows systems on all teams’ cloud networks. We also had access to at least one box on most of the teams’ local networks. Some systems were beaconing to our local team server; a few were beaconing over DNS to a node in Amazon’s Elastic Compute Cloud. The National CCDC event required teams to configure a proxy on each Windows system for it to connect to the internet. This didn’t happen on all systems, limiting my external Beacons. The second pool of accesses was still helpful in some cases though.

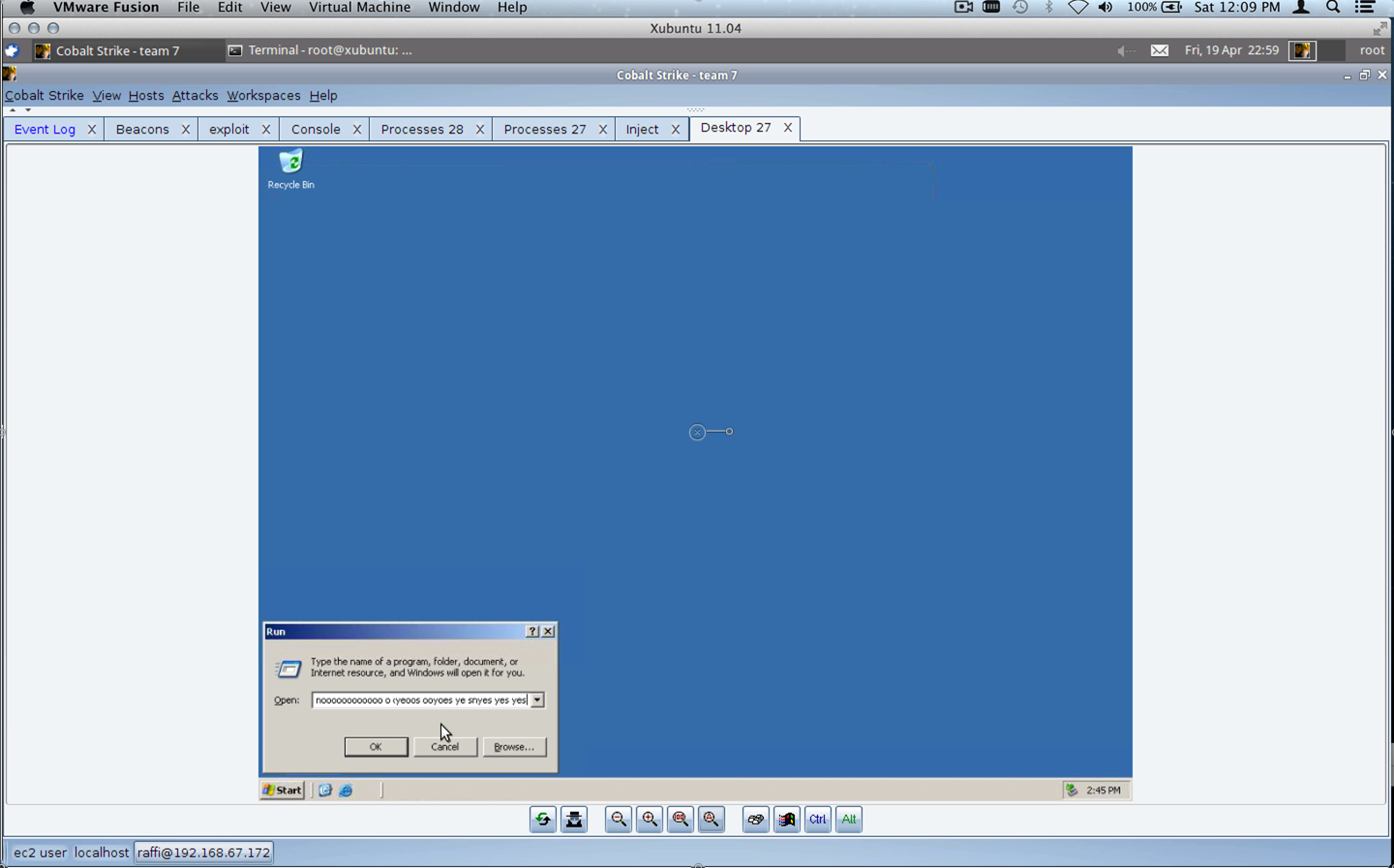

On day two, our team captain started blasting some classical music and instructing us to burn all of our boxes. The idea–get in on day 1, stay there, let the students snapshot their virtual machines with our backdoors, let them trust their snapshots, and on day 2–destroy their systems. We bounced systems for the first few hours of the day. We would jump on a system and destroy it, the students would restore it, our Beacons would phone home, we’d request a Meterpreter session, and then we’d destroy the system again.

<in a run window> me:Noooooooooooo RedTeam:yes yes yes yes. #nccdc #blueteamprobs @armitagehacker pic.twitter.com/PDNJCap15t

— Hadley (@hadley1210) April 21, 2013

This happened all throughout the morning. As a person who likes to keep access until the end, this was scary. Students were put into a catch-22 situation. They could revert to a snapshot with all of the work they did to the system plus our backdoors, or they could revert to a clean image. By the end of the morning, many teams opted to revert to the clean image.

We were able to re-exploit systems hosted in the students’ cloud networks when they were reverted to a clean image and re-backdoor them. That part was pretty easy. As the day went on, one red cell member might make a discovery and call everyone else’s attention to it. We would then work on replicating that discovery in our environment.

For example, Matt Weeks discovered a webshell pre-implanted by the competition organizers on an internal system. All of us found the webshell on our teams and went to work through it. In the default configuration, this webshell existed on Windows systems, giving us access to internal networks for some of the teams. By this time, access to internal networks was a nice find. We had bounced student systems so many times that the teams had reverted to a clean snapshot for their internal systems.

My team had migrated their web server from Windows to Ubuntu Linux. Fortunately, they kept the webshell with the migrated site giving us access to that system as well.

Each red team member had a good understanding of the point system. We knew, for example, that a root/administrator level intrusion counted once and only once per unique attack vector. There was no point in exploiting systems time and again with the same thing.
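To make that scoring rule concrete, here is a small Python sketch under stated assumptions: each root or administrator intrusion is recorded as a (team, attack vector) pair, and a pair scores once no matter how many times it is repeated. The function name, the data layout, and the assumption that uniqueness is judged per team are mine for illustration. The actual scoring system is not described here.

```python
def count_scoring_intrusions(events):
    """Count intrusions that would score, one per unique (team, attack_vector) pair.

    events: iterable of (team, attack_vector) tuples; repeats are expected.
    Returns a dict mapping each team to its number of distinct scoring vectors.
    """
    totals = {}
    for team, _vector in set(events):  # the set drops repeated pairs
        totals[team] = totals.get(team, 0) + 1
    return totals


if __name__ == "__main__":
    log = [
        ("team1", "ms08_067"),
        ("team1", "ms08_067"),   # same vector again: no extra credit
        ("team1", "default ssh password"),
        ("team2", "webshell"),
    ]
    print(count_scoring_intrusions(log))
```

Under this model, the second ms08_067 hit against the same team adds nothing, which is why finding a new vector mattered more than re-running an old one.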

We also knew that credit cards and other data flags were worth points.

One of the biggest hits we could make a team take came from publishing credit card information to their website for the whole world to see. We made sure to make this happen for all teams, where it was possible.

Overall, the plan worked. We didn’t achieve Dave’s lifelong dream of seeing every team down for every service across the board. But we were very well organized, we collaborated, and this year we gave the students at the National CCDC event the fairest and most balanced red experience yet.

Congratulations to RIT on its first National CCDC win. Congratulations to Dakota State University on a very close second place finish.
